Updated 2025/02/20: Fake frames by Gamers Nexus: a bit on DLSS 4.

Updated 2023/01/03: Added a bit about Frieren EP9.

On YouTube there’s always someone using AI to upscale to 4K and interpolate frames in anime openings. Some people think it looks good because it “moves” more and they are wrong.

I like referencing Noodle's video Smoother animation ≠ Better animation. You do not need to watch it to understand this post, but I still recommend it because Noodle is an actual animator. I don't plan on just repeating what the video says.

I’ll be using my own words and examples.

What is upscaling?

When it comes to images and videos, upscaling means increasing the dimensions of the frame. To illustrate, let's take a 102 by 102 pixel image and upscale it using Photoshop and Waifu2x:

Doubling the image's dimensions does harm its quality by doubling and softening the pixels. The 4 times image is unusable and downright bad.

Waifu2x uses a deep convolutional neural network to try to minimize the quality loss and retain the detail from the original image. It works quite nicely but it is far from perfect. Other AI enhancements can be used to upscale images.

In all the illustrated cases we are taking an image that is 102 by 102 pixels and adding more pixels. The only thing we can do is thus to interpolate.

What is interpolating?

Interpolation is a very complicated subject that can be basically watered down to estimating new data based on existing data.

In the case of this post I already referenced interpolating pixels to fill in the blanks when upscaling an image. If we took an image and did not interpolate pixels we would retain the original pixels but spaced out in a grid like this:

Since I’m increasing the size from 102 by 102 pixels to 408 by 408 pixels I’ve blacked out the pixels that will have to be estimated. Different sharpening and scaling algorithms will process the images differently and alter the output.

This example is only illustrating what is missing and what needs to be generated.
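To make the "blanks" concrete, here is a minimal Python sketch of the same idea on a tiny image. The 2 by 2 image, the function names and the fill strategy (plain block replication, the crudest form of nearest-neighbour scaling) are all assumptions for illustration; this is not what Photoshop or Waifu2x do internally.

```python
def spread_pixels(image, factor):
    """Place original pixels on a larger grid; None marks pixels to estimate."""
    height, width = len(image), len(image[0])
    grid = [[None] * (width * factor) for _ in range(height * factor)]
    for y in range(height):
        for x in range(width):
            grid[y * factor][x * factor] = image[y][x]
    return grid


def fill_by_replication(grid, factor):
    """Fill every pixel by copying the original pixel at the top-left of its block."""
    return [
        [grid[(y // factor) * factor][(x // factor) * factor] for x in range(len(row))]
        for y, row in enumerate(grid)
    ]


tiny = [[10, 200],
        [90, 30]]  # hypothetical 2x2 grayscale image
spread = spread_pixels(tiny, 4)
known = sum(value is not None for row in spread for value in row)
total = len(spread) * len(spread[0])
print(f"{known} of {total} pixels are original")  # 4 of 64 pixels are original
filled = fill_by_replication(spread, 4)
```

At 4 times the size only 1 pixel in 16 comes from the source. Everything else is a guess, and smarter algorithms only make better guesses.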

The same can be explained for increasing the framerate from 23.976 to 60FPS. Wait a second, 23.976FPS to 60FPS. Decimal numbers?

That’s a fun framerate to multiply to 60. Where are my comfy integer numbers? I like whole numbers. Anything that floats is complicated and scary.

Why interpolating DOESN’T work

While upscaling images with AI can work pretty well, frame rate interpolation doesn’t.

First of all, the video frame rate needs to be constant. If it’s defined as 23.976FPS we would need to multiply it by 2.5 to reach 59.94FPS, which is as close to 60FPS as we can get. Since 2.5 is not a whole number, we need to alter existing frames while generating 1.5 frames for each existing frame.

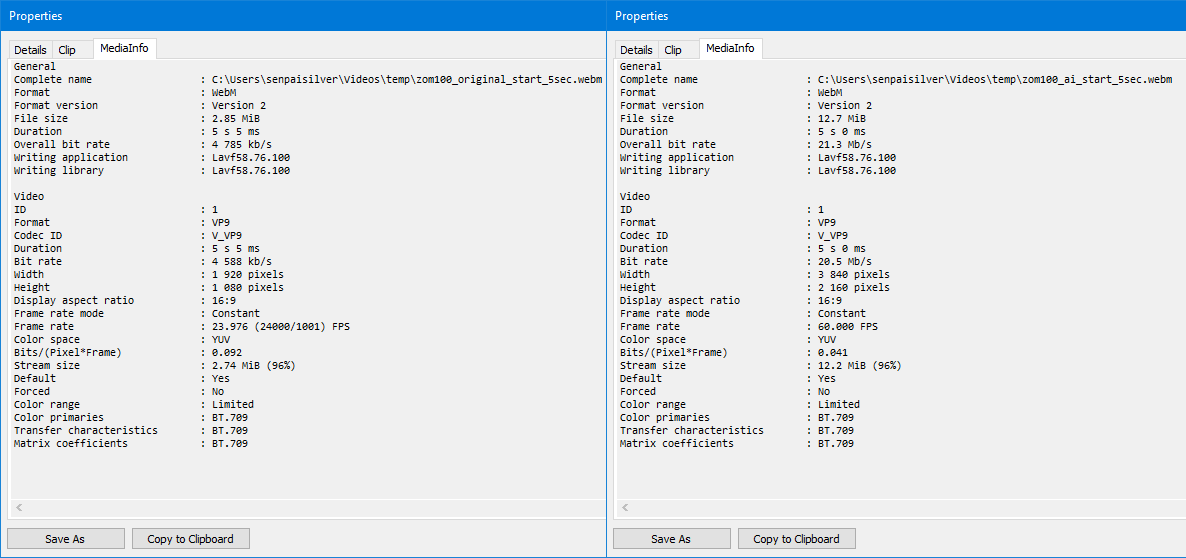
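The 2.5 multiplier can be checked with a few lines of Python. This sketch assumes the output frames are evenly spaced and that "23.976" and "59.94" stand for the exact rates 24000/1001 and 60000/1001; `source_position` is a hypothetical helper, not part of any tool mentioned here.

```python
from fractions import Fraction

SOURCE_FPS = Fraction(24000, 1001)  # assumed exact rate behind "23.976"
TARGET_FPS = Fraction(60000, 1001)  # assumed exact rate behind "59.94"


def source_position(output_index):
    """Position of an output frame on the source timeline, in source frames."""
    return output_index * SOURCE_FPS / TARGET_FPS


for k in range(6):
    pos = source_position(k)
    kind = "original" if pos.denominator == 1 else "generated"
    print(f"output frame {k}: source position {float(pos):.1f} -> {kind}")

# Output frames that land exactly on a source frame within the first ten.
aligned = [k for k in range(10) if source_position(k).denominator == 1]
print(aligned)  # [0, 5]
```

Under those assumptions only one output frame in five lines up with a drawn frame, and every second source frame (position 1, 3, 5 and so on) never lines up at all, so it can only be shown in an altered form.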

And this breaks everything. Here are two 5-second sequences taken from the opening of Zom 100 on YouTube.

Original video:

AI enhanced video:

Let’s get the total number of frames the lazy way:

ffprobe -v error -select_streams v:0 -count_packets \
    -show_entries stream=nb_read_packets -of csv=p=0 \
    zom100_original_start_5sec.webm
# 127

ffprobe -v error -select_streams v:0 -count_packets \
    -show_entries stream=nb_read_packets -of csv=p=0 \
    zom100_ai_start_5sec.webm
# 323

If my math is right, 323 / 127 = 2.543307086614173, which puts us close to the 2.5 multiplier. But let’s forget numbers because what really counts is the visuals.
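For anyone who wants to redo the arithmetic, here is a small Python sketch. The two frame counts come from the ffprobe calls above; the constant frame rates of 23.976 and 59.94 are assumptions, and the real clips may differ slightly.

```python
original_frames = 127  # from the first ffprobe call
enhanced_frames = 323  # from the second ffprobe call

ratio = enhanced_frames / original_frames
print(f"frame ratio: {ratio:.3f}")

# Assumed constant frame rates; these are not read from the files.
original_seconds = original_frames / 23.976
enhanced_seconds = enhanced_frames / 59.94
print(f"original: {original_seconds:.2f} s, enhanced: {enhanced_seconds:.2f} s")
```

If those rates hold, the two clips are not cut to exactly the same length, which would be enough to explain why the ratio is a bit above 2.5.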

Why is it ugly?

The gist of it is that we are creating new frames based on the previous and next frame while also altering existing frames to target the 60FPS that is being uploaded.

Let’s take a look frame by frame: each original frame lasts for 2 seconds while AI enhanced frames last for 1/3 of a second. The left and right videos aren’t synchronized because the frame rates are not an integer multiple of each other.

Obvious artifacting appears very early on around anything that moves. Sometimes it’s just blurring, as if motion blur had been introduced (on the bike), and sometimes the shapes are deformed (such as the numbers).

Another example:

The upscaled image is also sharpened and denoised to some degree which ends up messing up the contrast on the line art.

But 60FPS is nice when gaming

Yes, when playing 3D games each frame is rendered before being displayed on the screen. Videos are different because each frame is already rendered.

This is like the difference between pre-rendered cutscenes and in-engine cutscenes. Pre-rendered cutscenes are made for a specific resolution, frame rate, compression and colorspace.

In-engine cutscenes on the other hand will usually target the game’s resolution, framerate and colorspace without applying any compression. I say usually because some game engines lock the frame rate to a lower value such as 30FPS because of engine limitations or bad engine programming and design.

Rendering 30, 60, 120 or 144 frames a second by taking a picture of a 3D scene that is built right before being displayed is the key to fluidity.

Already rendered content will never be able to do such a thing because of the missing information.

Real world example from 60 to 23.976 to 60

Here is NieR Automata at 60FPS:

Reducing the frame rate to 23.976FPS makes the motion look less satisfying:

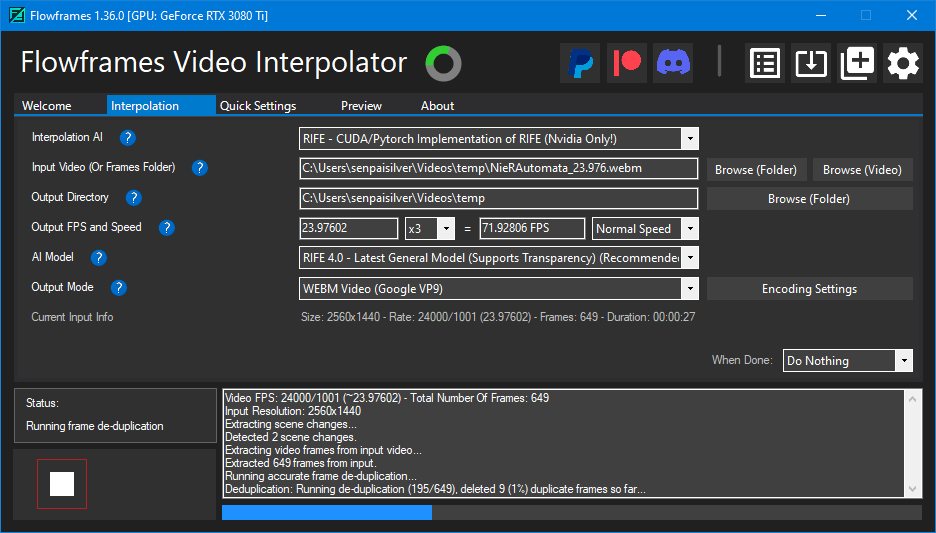

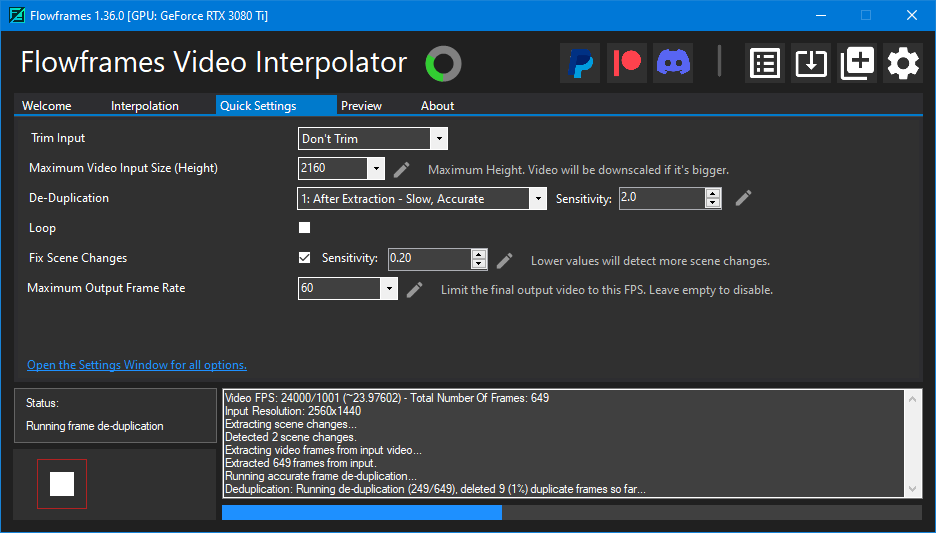

Now let’s interpolate the footage with Flowframes. It only lets me double or triple the frame rate, not set it to 60 directly, so I tripled it to 71.92806FPS and limited the output to 60FPS. Notice how the video is choppy:

Here are the settings used:

This is exactly what is being done to those anime openings… Disgusting.

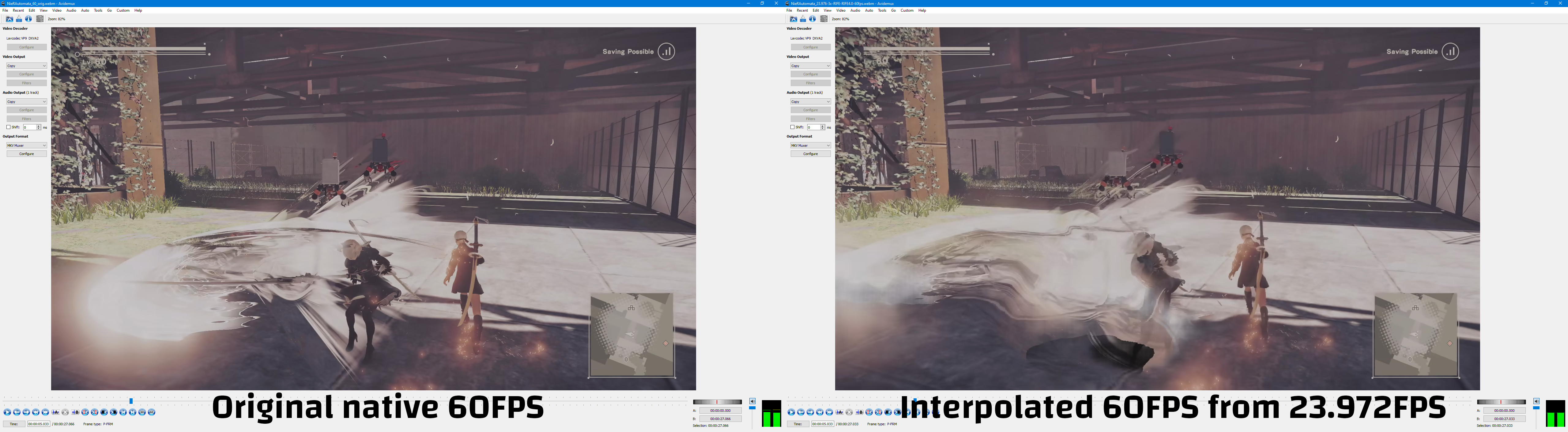
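Tripling 23.976FPS and then capping at 60FPS is a likely source of that choppiness. I don't know how Flowframes limits the frame rate internally, so this Python sketch assumes the simplest approach: at each 60FPS tick, keep the latest tripled-rate frame and drop the rest. `picked_frame` is a hypothetical helper.

```python
from fractions import Fraction

TRIPLED_FPS = Fraction(24000, 1001) * 3  # about 71.928, assumed exact rate
CAPPED_FPS = Fraction(60)


def picked_frame(output_index):
    """Index of the latest tripled-rate frame available at this 60FPS tick."""
    # int() truncates, which equals floor for non-negative values.
    return int(output_index * TRIPLED_FPS / CAPPED_FPS)


picked = [picked_frame(k) for k in range(12)]
steps = [b - a for a, b in zip(picked, picked[1:])]
print(picked)  # [0, 1, 2, 3, 4, 5, 7, 8, 9, 10, 11, 13]
print(steps)   # [1, 1, 1, 1, 1, 2, 1, 1, 1, 1, 2]
```

Under that assumption the motion advances by one interpolated frame most of the time and by two every five or six frames. That uneven step is judder stacked on top of the interpolation artifacts.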

Let’s compare the original native 60FPS footage with the interpolated to 60FPS from 23.976FPS:

The screenshots speak for themselves and express the difference in a much more obvious way, but let’s go a bit further and slow down the video clips while having them side by side and centered on 2B:

Slowing the clips down to 25% shows how bad it gets. Same goes for that Jujutsu Kaisen S2 opening:

It jitters, the text is deformed… I hate it. Anime is not drawn for 60FPS.

Misguided demand exists

Sadly TVs are being sold with some smoothing technology and marketed as good looking. I’m not sure how people perceive it as something nice when it should be weirding them out.

I also remember that The Hobbit being shown at 48FPS in movie theatres weirded people out quite a bit, but was it because of the 3D effect too? No idea.

NVIDIA is also working on adding frame rate smoothing to their GPUs when encoding, weird idea…

The reason I made this post is that I’m tired of seeing openings such as Zom100 and Jujutsu Kaisen S2 being upscaled, sharpened to hell and then interpolated to get weird motion and no improvement.

Conclusion

Most people who aim for 60+FPS content do so because fluidity is important and enhances the pleasure of the visuals. Sadly most people don’t seem to actually pay attention to the composition and fail to notice the botched detail and weird movements.

Do I envy people that are not able to perceive what’s wrong with frame rate interpolating? No I don’t. I just think it’s sad that they don’t notice the destroyed detail.

This trend of upscaling and interpolating the framerate will not die anytime soon and this makes me sad because we have some very nice animations that are designed for low framerate and only work that way.

Spoilers ahead but here are some nice animations:

- Psycho Pass (movie);

- Tengoku Daimakyou EP1;

- Darker than Black Ryuusei no Gemini;

- Shingeki no Kyojin.

In all of the above scenes we have animations that are not 60FPS and do not need to be. Motion is properly conveyed through direction and effects.

As a closing note I suggest watching Satoshi Kon Editing Space & Time by Every Frame a Painting. At the 4:47 mark they talk about motion; it’s interesting to see how animation can convey more action with fewer frames than live action.

Sousou no Frieren EP9

If Japanese animation proved anything in 2023 it’s that anime still doesn’t need to be high framerate. In Sousou no Frieren episode 9 we have multiple fights happening at the same time in the second half of the episode and the animation is perfect.

The fights are either fast paced and smooth or detailed and smooth. No frame is out of place. This is the standard of animation that we would expect from a full blown movie.

Since it’s copyrighted and I don’t want to stretch fair use (especially because Japan doesn’t really practice that) I will only be posting one clip.

Madhouse published some tweets to show the behind the scenes for the keyframes:

TV anime "Sousou no Frieren"

Thank you for watching ✨🪄Line test showcase🪄

Episode 9 "Aura the Guillotine"

Fern vs Lügner

Part ①, animated by: Kouki Fujimoto (藤本航己) #フリーレン #frieren pic.twitter.com/owQtcRpM1W

— MADHOUSE Inc. (@Madhouse_News) November 3, 2023

Those keyframes are so clean the finished version can only be the best:

Full animated scene from Sakugabooru.

Fake Frame Image Quality: DLSS 4 & MFG

Gamers Nexus investigated what they call fake frames, and I think it has value since frame generation has been pushed in gaming harder than ever since 2024.